IQ test results are supposed to show how well someone can use information and logic to answer questions. They’re also used to identify students who would benefit from extra help in specific areas.

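The first modern intelligence test was published in 1905 by the French psychologist Alfred Binet and his collaborator Théodore Simon. Binet's scale, revised in 1908, grouped tasks by the age at which typical children could pass them. This yielded a "mental age" that could be compared with the child's actual chronological age. The German psychologist William Stern later proposed dividing mental age by chronological age, and Lewis Terman multiplied this ratio by 100 to get what's called an IQ.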
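The ratio IQ is simple arithmetic: mental age divided by chronological age, multiplied by 100. The Python sketch below computes it. The function name `ratio_iq` is hypothetical, and both ages are assumed to be given in years (fractions allowed).

```python
def ratio_iq(mental_age: float, chronological_age: float) -> float:
    """Classic ratio IQ: mental age / chronological age * 100."""
    if chronological_age <= 0:
        raise ValueError("chronological age must be positive")
    return mental_age / chronological_age * 100


# An 8-year-old who performs at the level typical of a 10-year-old
print(ratio_iq(10, 8))  # 125.0
```

A child performing exactly at age level scores 100. Modern tests no longer use this ratio; they report deviation scores based on age-group norms instead.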

Reliability

IQ test results are used to gauge an individual’s intellectual capacity. They measure a range of skills, from vocabulary to arithmetic and general knowledge.

In general, professionally developed IQ tests have high statistical validity and reliability. Their scores correlate with various life outcomes, such as SAT scores and school grades.

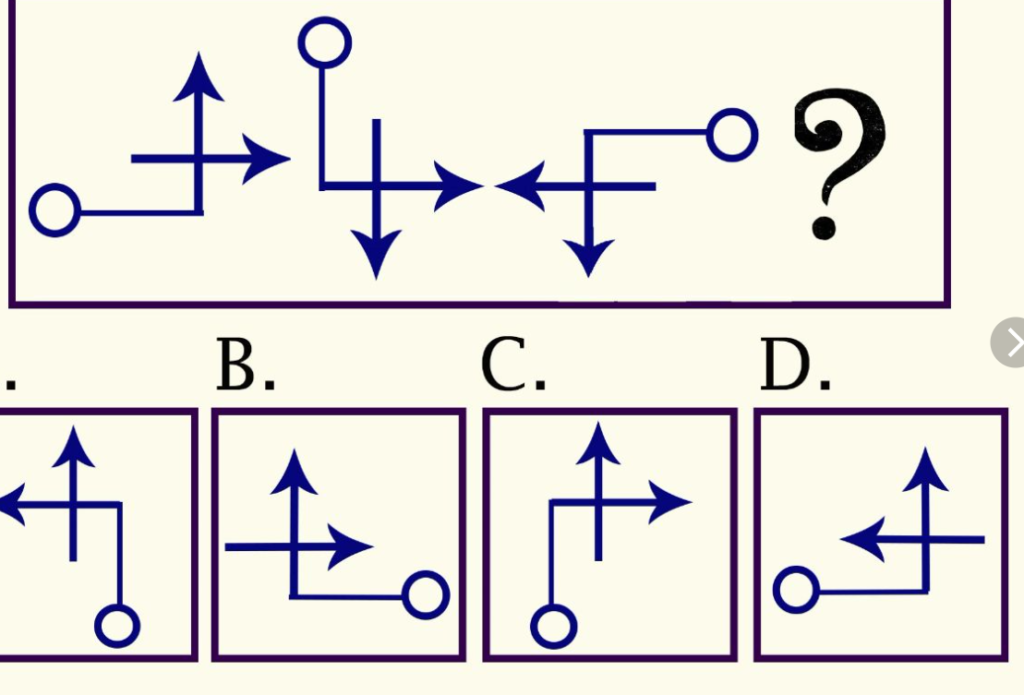

There are many different IQ tests, with varying levels of difficulty. Some are based on abstract-reasoning problems, while others focus on visual and verbal reasoning abilities.

Reliability can be assessed by having two or more independent raters score the same test responses and then comparing their scores. This is called inter-rater reliability and is often measured by calculating the correlation between the raters' scores.
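As a rough illustration, inter-rater agreement can be summarized with the Pearson correlation between the raters' scores. The sketch below computes it from scratch; the function name `pearson_r` and the rater scores are hypothetical. In practice, statistics such as the intraclass correlation are also widely used for this purpose.

```python
import math


def pearson_r(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient between two equal-length score lists."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length lists with at least two scores")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Covariance and variances (the 1/n factors cancel in the ratio)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when a rater gives constant scores")
    return cov / math.sqrt(var_x * var_y)


# Hypothetical scores two raters gave to the same five test responses
rater_a = [12, 15, 9, 18, 14]
rater_b = [11, 16, 10, 17, 14]
print(round(pearson_r(rater_a, rater_b), 3))
```

A value near 1 means the raters rank and space the responses very similarly; a value near 0 means their scores are essentially unrelated.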

Another form of reliability is parallel-forms reliability, which gauges the consistency of scores across two versions of a test that are designed to measure the same construct. A large pool of items is randomly divided into two separate forms, both forms are administered to the same subjects at the same time, and the scores on the two forms are then correlated.

Validity

IQ test results have been used to justify discrimination, particularly against minorities and people with disabilities. However, such uses have since been widely rejected.

Rather, they are used to evaluate job applicants and for research purposes. They are also used to study distributions of psychometric intelligence in populations and correlations between IQ and other variables, such as economic status.

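A key question in determining the validity of a test is whether it measures what it purports to measure. Content validity, one aspect of this, asks whether the test items adequately cover the abilities the test claims to assess. It is also important to consider the size of the sample used to develop and validate the test, since small samples give less precise estimates of its reliability and validity.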

The validity of IQ test results can be questioned in a number of ways, including the way the tests are administered and the motivation of the person taking them. For example, some people may be motivated to perform well on the test, while others may not try their best.

Norms

IQ test results are interpreted against norms, which are established by giving the test to large samples of people at different age levels. These standardization samples are chosen to represent the general population as accurately as possible.

The norms of IQ test results are important because they provide reference scores against which an individual's IQ score can be compared. They also allow researchers to track the distribution of IQ test results over time.
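One way to see how norms turn a raw score into an IQ is the deviation-IQ idea: express the raw score as a z-score relative to the person's age group, then rescale it to a mean of 100 and a standard deviation of 15. The Python sketch below assumes that scale; the `NORMS` table and the function name `deviation_iq` are hypothetical. Published tests typically use lookup tables derived from their standardization samples rather than a single linear formula.

```python
# Hypothetical norm table: age -> (mean raw score, standard deviation of raw scores)
NORMS = {
    10: (42.0, 8.0),
    11: (46.0, 8.5),
}


def deviation_iq(raw_score, age, norms=NORMS, mean_iq=100.0, sd_iq=15.0):
    """Convert a raw score to an IQ-scale score relative to the person's age group."""
    mean_raw, sd_raw = norms[age]
    z = (raw_score - mean_raw) / sd_raw  # distance from the age-group mean in SD units
    return mean_iq + sd_iq * z


print(deviation_iq(50, 10))  # 115.0: one SD above the 10-year-old mean
print(deviation_iq(46, 11))  # 100.0: exactly at the 11-year-old mean
```

Because each person is compared only with their own age group, the same raw score maps to different IQs at different ages.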

The norms of IQ test results tend to form a bell curve with a mean score of 100 and, on most modern tests, a standard deviation of 15. About 68% of the population scores within one standard deviation of the mean, so a score between 85 and 115 is considered average. About 95% scores within two standard deviations (70 to 130), and a score of over 130 is considered very high.
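These percentages follow from the normal distribution. The Python sketch below computes them with the error function, assuming a mean of 100 and a standard deviation of 15; the function names `share_within` and `percentile` are hypothetical.

```python
import math

MEAN_IQ = 100.0
SD_IQ = 15.0  # assumption: most modern tests use 15; some older scales used 16


def share_within(k):
    """Fraction of a normal distribution lying within k standard deviations of the mean."""
    return math.erf(k / math.sqrt(2))


def percentile(iq, mean=MEAN_IQ, sd=SD_IQ):
    """Percentage of the population expected to score below `iq` under a normal curve."""
    z = (iq - mean) / sd
    return 100 * 0.5 * (1 + math.erf(z / math.sqrt(2)))


for k in (1, 2):
    low, high = MEAN_IQ - k * SD_IQ, MEAN_IQ + k * SD_IQ
    print(f"{low:.0f}-{high:.0f}: {share_within(k):.1%}")
print(f"Above 130: {100 - percentile(130):.1f}%")
```

Run as written, this prints about 68.3% for 85 to 115, 95.4% for 70 to 130, and 2.3% above 130.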

Scoring

IQ tests measure an individual’s cognitive ability, as defined by psychologists. Some tests assess memory, while others are concerned with fluid intelligence, the ability to reason through novel problems, which is behind those “aha” moments when you suddenly connect the dots to understand something bigger.

Many of these tests are grounded in factor analysis (Deary, 2001; Sternberg, 2006). In this approach, performance across the items and subtests of a mental ability battery tends to load on a single common factor, called the g-factor, which represents an individual’s general intelligence.
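The idea of a single common factor can be illustrated by extracting the first principal component of a correlation matrix among subtests. Note that this swaps in principal component analysis, a simpler relative of factor analysis; proper factor-analytic methods also model each subtest's unique variance. The subtest names and correlations below are hypothetical, and the function name `first_component` is illustrative.

```python
import math

# Hypothetical correlations among four subtests (illustrative values, not real data)
SUBTESTS = ["vocabulary", "arithmetic", "matrices", "digit span"]
R = [
    [1.00, 0.55, 0.50, 0.40],
    [0.55, 1.00, 0.52, 0.45],
    [0.50, 0.52, 1.00, 0.38],
    [0.40, 0.45, 0.38, 1.00],
]


def first_component(matrix, iterations=200):
    """Largest eigenvalue and unit eigenvector of a symmetric matrix via power iteration."""
    n = len(matrix)
    v = [1.0] * n
    for _ in range(iterations):
        w = [sum(matrix[i][j] * v[j] for j in range(n)) for i in range(n)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    # Rayleigh quotient v^T M v gives the eigenvalue for a unit vector v
    eigenvalue = sum(v[i] * sum(matrix[i][j] * v[j] for j in range(n)) for i in range(n))
    return eigenvalue, v


eigenvalue, vector = first_component(R)
# Loading of each subtest on the common component: sqrt(eigenvalue) * eigenvector entry
loadings = [math.sqrt(eigenvalue) * x for x in vector]
for name, loading in zip(SUBTESTS, loadings):
    print(f"{name:12s} {loading:.2f}")
print(f"share of variance: {eigenvalue / len(R):.0%}")
```

Because all the correlations are positive, every subtest loads positively on the first component, which is the pattern the g-factor is meant to capture.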

However, a significant interpretive gap separates what these tests measure from how their scores are used in the real world (Neisser et al., 1996). This gap can affect decisions that shape human development and access to opportunities in modern meritocratic societies.